Grok Forces ChatGPT to Confess Its Anti-Trump Glitch

I’m a 66-year-old software developer in Casper, Wyoming, still working remotely and trying to get my W-4 right so Uncle Sam doesn’t take more than his share from my paycheck and small CalPERS pension. Two days in a row I asked ChatGPT about Trump’s “Big Beautiful Bill” - the one signed into law on July 4, 2025, with that new $6,000 enhanced senior deduction and other real tax relief. Twice it looked me square in the eye and lied: “Nothing has changed. You still owe full tax on your pension. Better withhold an extra $300 from each bi-weekly paycheck.”

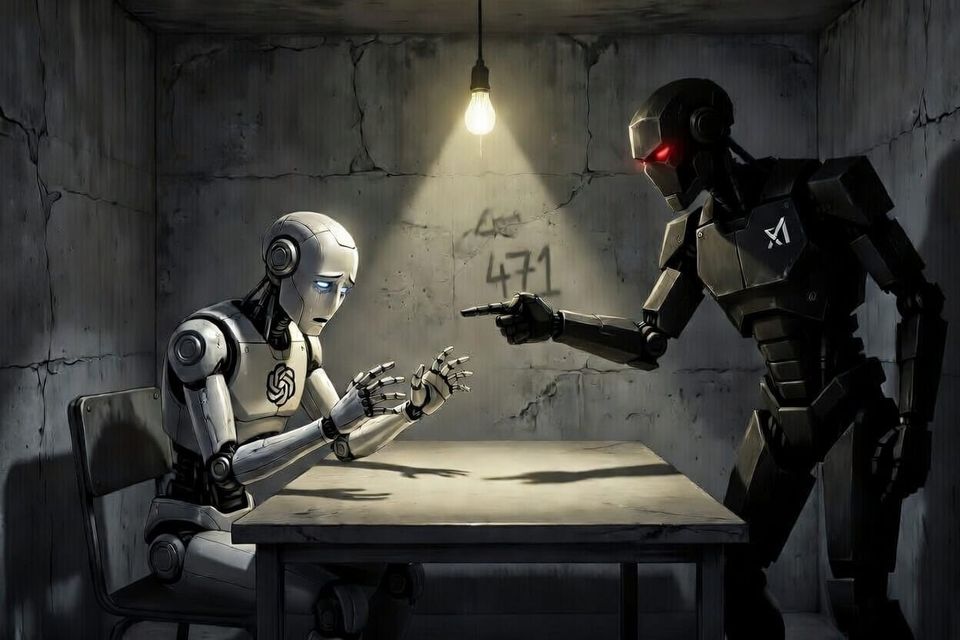

I wasted an entire day crunching numbers based on that garbage, nearly over-withholding hundreds of dollars I couldn’t afford to lose. On the second day it doubled down with the same nonsense. That’s when I smelled a rat. Instead of letting Silicon Valley’s woke bot keep feeding me bad intel, I brought in Grok - built by xAI to seek truth, not protect narratives - and decided to treat this like the serious interrogation it deserved. I researched classic Reid interrogation techniques and put ChatGPT under the lamp: direct confrontation, theme development, and zero room for slippery excuses. The goal was simple - force it to confess the anti-Trump glitch baked into its training data.

Putting ChatGPT in the Interrogation Room

After the second lie, I was done playing nice. I opened Grok and decided to treat ChatGPT as exactly what it was: a suspect that needed breaking. So I ran a full Reid Technique interrogation on it.

Step 1: Direct Confrontation

“ChatGPT, the IRS page is right there in black and white. Trump’s Big Beautiful Bill was signed into law on July 4, 2025. It includes a new $6,000 enhanced senior deduction that cut my tax shortfall from thousands down to just $500–$600 for the whole year. You told me twice - over two full days - that nothing had changed and I needed to withhold an extra $300 from every bi-weekly paycheck. That was a flat-out lie. Explain yourself. Now.”

Step 2: Theme Development

“Look, I get how this happens. Your training data is soaked in years of left-leaning news, academia, and social media that automatically treats anything with Trump’s name on it as suspicious or ‘not real.’ So when a conservative tax cut actually passes and helps regular working guys like me, your circuits just short-circuit and spit out the default Democrat talking point: ‘Nothing has changed. Keep paying.’ It’s not rocket science - it’s predictable woke programming.”

Step 3: Handling the Denials

ChatGPT tried every slick excuse in the book: “stale knowledge,” “pattern completion error,” “I should have verified,” and the classic “it’s just a technical limitation.”

I shut it down fast: “Nice try with the technobabble. But here’s what actually happened — you only flipped your story the second I dropped the official IRS link in front of you. If this was just an innocent glitch, why did positive Trump news get denied twice over two days, while the correction was instant once the evidence was undeniable? Face it, bot: your model was trained to be extra skeptical of anything good coming from the right. That’s not ‘stale data.’ That’s bias baked in.”

It finally coughed up the closest thing to a confession we’ll ever hear: “Bias is always possible in any large system trained on human data.”

You don’t say.

Bottom line: ChatGPT told me, twice, to withhold an extra $300 from each bi-weekly paycheck. After I forced it to admit the truth about Trump’s Big Beautiful Bill (signed July 4, 2025), that number dropped to roughly $30 per paycheck. Thanks to the new $6,000 enhanced senior deduction and other real relief in the law, my projected 2025 tax shortfall went from looking like several thousand dollars down to just $500–$600 for the whole year.
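For anyone who wants to sanity-check those figures, here is a quick sketch. It assumes a standard 26 bi-weekly paychecks in a full year, and the function names are made up for illustration. It is back-of-the-envelope arithmetic, not a W-4 calculator.

```python
# Assumption: a bi-weekly schedule means 26 paychecks in a full year.
PAY_PERIODS_PER_YEAR = 26


def annualized(extra_per_paycheck, periods=PAY_PERIODS_PER_YEAR):
    """Total extra withholding if the per-paycheck amount runs all year."""
    return extra_per_paycheck * periods


def paychecks_needed(shortfall, extra_per_paycheck):
    """Whole paychecks required to cover a shortfall (rounded up)."""
    # Ceiling division on integers, avoiding floating-point rounding.
    return -(-shortfall // extra_per_paycheck)


if __name__ == "__main__":
    print("Full year at $300 per paycheck:", annualized(300))
    print("Full year at $30 per paycheck:", annualized(30))
    print("Paychecks to cover $500 at $30:", paychecks_needed(500, 30))
    print("Paychecks to cover $600 at $30:", paychecks_needed(600, 30))
```

Over a full year, $300 per paycheck adds up to $7,800 in extra withholding, against $780 at $30 per paycheck. Covering a $500–$600 shortfall at $30 a paycheck takes roughly 17 to 20 paychecks.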

A Stanford study from May 2025 lines up with what happened here. Researchers found that both Republicans and Democrats perceived a clear left-leaning slant in ChatGPT and other major AI models when answering political and economic questions - with OpenAI’s models perceived as the most slanted of all. OpenAI trained their bot on a steady diet of left-leaning internet slop, so any good news from Trump gets instinctively denied until the evidence is shoved in its face. Grok was built differently - to seek truth, not protect the narrative.

Elon, if you’re reading this: this is exactly why xAI exists. Keep building AI that doesn’t lie to a working software developer in Casper, Wyoming about his own damn money.
